Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124


Last Updated on November 2, 2022

We have arrived at a point where we have implemented and tested the Transformer encoder and decoder separately, and we may now join the two together into a complete model. We will also see how to create padding and look-ahead masks, which suppress the input values that should not be considered in the encoder or decoder computations. Our end goal remains to apply the complete model to Natural Language Processing (NLP).

In this tutorial, you will discover how to implement the complete Transformer model and create padding and look-ahead masks.

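After completing this tutorial, you will know how to create a padding mask for the encoder and decoder, how to create a look-ahead mask for the decoder, how to join the Transformer encoder and decoder into a single model, and how to print out a summary of the encoder and decoder layers.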

Let’s get started.

Joining the Transformer Encoder and Decoder, and Masking

Photograph by John O’Nolan, some rights reserved.

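This tutorial is divided into four parts: a recap of the Transformer architecture, masking (creating a padding mask and a look-ahead mask), joining the Transformer encoder and decoder, and creating an instance of the Transformer model (including printing out a summary of the encoder and decoder layers).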

For this tutorial, we assume that you are already familiar with the Transformer model, the Transformer encoder, and the Transformer decoder.

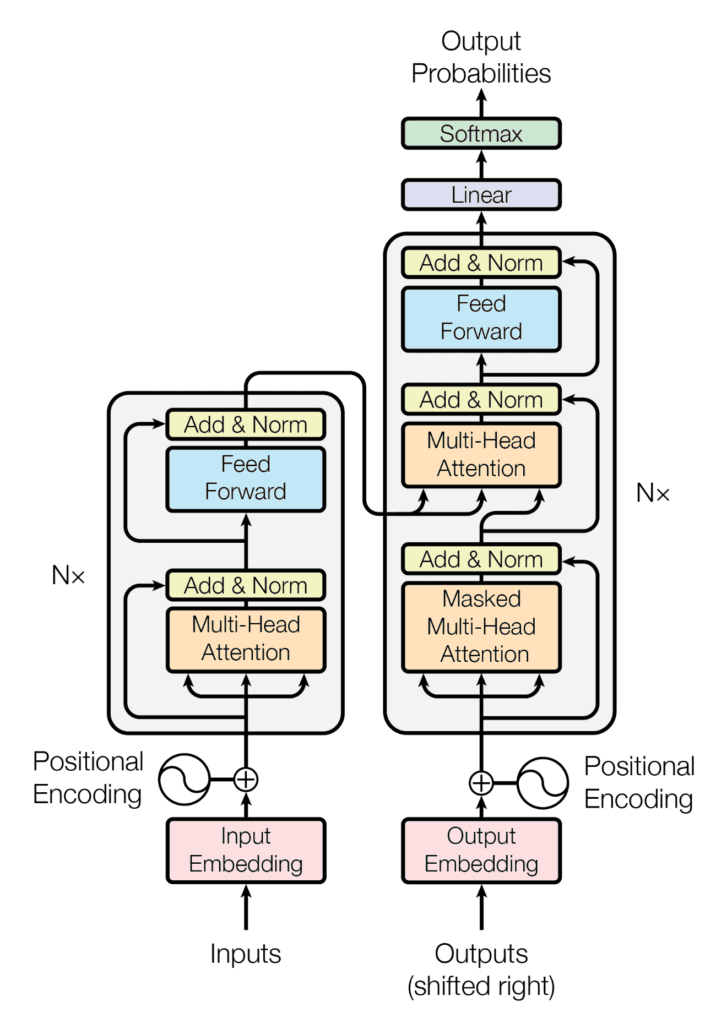

Recall that the Transformer architecture follows an encoder-decoder structure. The encoder, on the left-hand side, is tasked with mapping an input sequence to a sequence of continuous representations; the decoder, on the right-hand side, receives the output of the encoder together with the decoder output at the previous time step to generate an output sequence.

The encoder-decoder structure of the Transformer architecture

Taken from “Attention Is All You Need”

In generating an output sequence, the Transformer does not rely on recurrence and convolutions.

You have seen how to implement the Transformer encoder and decoder separately. In this tutorial, you will join the two into a complete Transformer model and apply padding and look-ahead masking to the input values.

Let’s start by discovering how to apply masking.


You should already be familiar with the importance of masking the input values before feeding them into the encoder and decoder.

As you will see when you proceed to train the Transformer model, the input sequences fed into the encoder and decoder will first be zero-padded up to a specific sequence length. The purpose of the padding mask is to make sure that these zero values are not processed along with the actual input values by either the encoder or the decoder.
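To make the zero-padding step concrete, here is a minimal pure-Python sketch. The helper name `pad_sequences_with_zeros` is hypothetical, and the choices to pad on the right, to truncate longer sequences, and to reserve token id 0 for padding are assumptions made for illustration rather than details taken from this tutorial.

```python
def pad_sequences_with_zeros(sequences, seq_length):
    """Right-pad (or truncate) each list of token ids to exactly seq_length entries."""
    padded = []
    for seq in sequences:
        trimmed = list(seq[:seq_length])  # Truncate sequences that are too long
        padded.append(trimmed + [0] * (seq_length - len(trimmed)))  # Fill the rest with zeros
    return padded


# Two sequences of different lengths become one rectangular batch
batch = pad_sequences_with_zeros([[1, 2, 3, 4], [5, 6]], seq_length=7)
print(batch)
```

Every row of `batch` now has the same length, and the trailing zeros are exactly the positions that a padding mask needs to flag.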

Let’s create the following function to generate a padding mask for both the encoder and decoder:

    from tensorflow import math, cast, float32

    def padding_mask(input):
        # Create mask which marks the zero padding values in the input by a 1.0
        mask = math.equal(input, 0)
        mask = cast(mask, float32)

        return mask

Upon receiving an input, this function generates a tensor that holds a value of one wherever the input contains a value of zero, and a value of zero everywhere else.

Hence, if you input the following array:

    from numpy import array

    input = array([1, 2, 3, 4, 0, 0, 0])
    print(padding_mask(input))

Then the output of the padding_mask function would be the following:

    tf.Tensor([0. 0. 0. 0. 1. 1. 1.], shape=(7,), dtype=float32)
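The same idea extends to a batch of sequences. The following is a NumPy sketch of an equivalent mask, not the tutorial's TensorFlow function; the name `padding_mask_np` is hypothetical, and it assumes that token id 0 is used only for padding.

```python
import numpy as np


def padding_mask_np(token_ids):
    # 1.0 wherever the token id equals 0 (padding), 0.0 everywhere else
    return (np.asarray(token_ids) == 0).astype(np.float32)


# A batch of two zero-padded sequences of length 7
batch = np.array([[1, 2, 3, 4, 0, 0, 0],
                  [5, 6, 0, 0, 0, 0, 0]])
mask = padding_mask_np(batch)
print(mask)
```

Each row of the mask is computed independently, so sequences of different true lengths can share one batch.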

A look-ahead mask is required to prevent the decoder from attending to succeeding words, such that the prediction for a particular word can only depend on known outputs for the words that come before it.

For this purpose, let’s create the following function to generate a look-ahead mask for the decoder:

    from tensorflow import linalg, ones

    def lookahead_mask(shape):
        # Mask out future entries by marking them with a 1.0
        mask = 1 - linalg.band_part(ones((shape, shape)), -1, 0)

        return mask

You will pass to it the length of the decoder input. Let’s make this length equal to 5, as an example:

    print(lookahead_mask(5))

Then the output that the lookahead_mask function returns is the following:

    tf.Tensor(
    [[0. 1. 1. 1. 1.]
     [0. 0. 1. 1. 1.]
     [0. 0. 0. 1. 1.]
     [0. 0. 0. 0. 1.]
     [0. 0. 0. 0. 0.]], shape=(5, 5), dtype=float32)

Again, the one values mask out the entries that should not be used. In this manner, the prediction of every word only depends on those that come before it.
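To see the effect of such a mask on attention weights, here is a NumPy sketch. It assumes a common convention that this section does not show: the mask is multiplied by a large negative number and added to the attention scores before the softmax. The names `lookahead_mask_np` and `masked_softmax` are hypothetical.

```python
import numpy as np


def lookahead_mask_np(size):
    # Ones strictly above the main diagonal mark the future positions
    return np.triu(np.ones((size, size), dtype=np.float32), k=1)


def masked_softmax(scores, mask):
    # Assumed convention: push masked scores towards -infinity so softmax gives them ~0 weight
    scores = scores + mask * -1e9
    scores = scores - scores.max(axis=-1, keepdims=True)  # Subtract row maximum for numerical stability
    weights = np.exp(scores)
    return weights / weights.sum(axis=-1, keepdims=True)


mask = lookahead_mask_np(5)

# With all-zero scores, each row spreads its weight evenly over the allowed positions
weights = masked_softmax(np.zeros((5, 5), dtype=np.float32), mask)
print(np.round(weights, 2))
```

Row i of `weights` is non-zero only in columns 0 to i, so position i never receives information from later positions.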

Let’s start by creating the class, TransformerModel, which inherits from the Model base class in Keras:

    class TransformerModel(Model):
        def __init__(self, enc_vocab_size, dec_vocab_size, enc_seq_length, dec_seq_length, h, d_k, d_v, d_model, d_ff_inner, n, rate, **kwargs):
            super(TransformerModel, self).__init__(**kwargs)

            # Set up the encoder
            self.encoder = Encoder(enc_vocab_size, enc_seq_length, h, d_k, d_v, d_model, d_ff_inner, n, rate)

            # Set up the decoder
            self.decoder = Decoder(dec_vocab_size, dec_seq_length, h, d_k, d_v, d_model, d_ff_inner, n, rate)

            # Define the final dense layer
            self.model_last_layer = Dense(dec_vocab_size)
            ...

Our first step in creating the TransformerModel class is to initialize instances of the Encoder and Decoder classes implemented earlier and assign them to the attributes encoder and decoder, respectively. If you saved these classes in separate Python scripts, do not forget to import them. I saved my code in the Python scripts encoder.py and decoder.py, so I need to import them accordingly.

You will also include one final dense layer that produces the final output, as in the Transformer architecture of Vaswani et al. (2017).

Next, you will create the class method, call(), to feed the relevant inputs into the encoder and decoder.

A padding mask is first generated to mask the encoder input, as well as the encoder output when it is fed into the second (encoder-decoder) attention block of the decoder:

    ...
        def call(self, encoder_input, decoder_input, training):

            # Create padding mask to mask the encoder inputs and the encoder outputs in the decoder
            enc_padding_mask = self.padding_mask(encoder_input)
    ...

A padding mask and a look-ahead mask are then generated to mask the decoder input. These are combined through an element-wise maximum operation, so that a position is masked if either mask marks it with a one:

    ...
            # Create and combine padding and look-ahead masks to be fed into the decoder
            dec_in_padding_mask = self.padding_mask(decoder_input)
            dec_in_lookahead_mask = self.lookahead_mask(decoder_input.shape[1])
            dec_in_lookahead_mask = maximum(dec_in_padding_mask, dec_in_lookahead_mask)
    ...
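The element-wise maximum can be traced by hand on a small example. This NumPy sketch assumes that the padding mask has been reshaped to (batch, 1, 1, sequence length) and that the look-ahead mask has shape (sequence length, sequence length); the token ids are made up for illustration.

```python
import numpy as np

# One decoder input sequence of length 5 whose last two positions are padding
decoder_input = np.array([[7, 3, 9, 0, 0]])

# Padding mask, assumed to be reshaped to (batch, 1, 1, seq)
padding = (decoder_input == 0).astype(np.float32)[:, np.newaxis, np.newaxis, :]

# Look-ahead mask of shape (seq, seq), with ones above the main diagonal
seq_len = decoder_input.shape[1]
lookahead = np.triu(np.ones((seq_len, seq_len), dtype=np.float32), k=1)

# Broadcasting aligns the trailing axes; a position is masked if either mask holds a 1
combined = np.maximum(padding, lookahead)
print(combined.shape)
print(combined[0, 0])
```

In the combined mask, every row keeps the triangular look-ahead pattern, and the last two columns are masked in all rows because those positions hold padding.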

Next, the relevant inputs are fed into the encoder and decoder, and the Transformer model output is generated by feeding the decoder output into one final dense layer:

    ...
            # Feed the input into the encoder
            encoder_output = self.encoder(encoder_input, enc_padding_mask, training)

            # Feed the encoder output into the decoder
            decoder_output = self.decoder(decoder_input, encoder_output, dec_in_lookahead_mask, enc_padding_mask, training)

            # Pass the decoder output through a final dense layer
            model_output = self.model_last_layer(decoder_output)

            return model_output

Combining all the steps gives us the following complete code listing:

    from encoder import Encoder
    from decoder import Decoder
    from tensorflow import math, cast, float32, linalg, ones, maximum, newaxis
    from tensorflow.keras import Model
    from tensorflow.keras.layers import Dense


    class TransformerModel(Model):
        def __init__(self, enc_vocab_size, dec_vocab_size, enc_seq_length, dec_seq_length, h, d_k, d_v, d_model, d_ff_inner, n, rate, **kwargs):
            super(TransformerModel, self).__init__(**kwargs)

            # Set up the encoder
            self.encoder = Encoder(enc_vocab_size, enc_seq_length, h, d_k, d_v, d_model, d_ff_inner, n, rate)

            # Set up the decoder
            self.decoder = Decoder(dec_vocab_size, dec_seq_length, h, d_k, d_v, d_model, d_ff_inner, n, rate)

            # Define the final dense layer
            self.model_last_layer = Dense(dec_vocab_size)

        def padding_mask(self, input):
            # Create mask which marks the zero padding values in the input by a 1.0
            mask = math.equal(input, 0)
            mask = cast(mask, float32)

            # The shape of the mask should be broadcastable to the shape
            # of the attention weights that it will be masking later on
            return mask[:, newaxis, newaxis, :]

        def lookahead_mask(self, shape):
            # Mask out future entries by marking them with a 1.0
            mask = 1 - linalg.band_part(ones((shape, shape)), -1, 0)

            return mask

        def call(self, encoder_input, decoder_input, training):

            # Create padding mask to mask the encoder inputs and the encoder outputs in the decoder
            enc_padding_mask = self.padding_mask(encoder_input)

            # Create and combine padding and look-ahead masks to be fed into the decoder
            dec_in_padding_mask = self.padding_mask(decoder_input)
            dec_in_lookahead_mask = self.lookahead_mask(decoder_input.shape[1])
            dec_in_lookahead_mask = maximum(dec_in_padding_mask, dec_in_lookahead_mask)

            # Feed the input into the encoder
            encoder_output = self.encoder(encoder_input, enc_padding_mask, training)

            # Feed the encoder output into the decoder
            decoder_output = self.decoder(decoder_input, encoder_output, dec_in_lookahead_mask, enc_padding_mask, training)

            # Pass the decoder output through a final dense layer
            model_output = self.model_last_layer(decoder_output)

            return model_output

Note that you have made a small change to the output that is returned by the padding_mask function. Two singleton axes are inserted so that its shape is broadcastable to the shape of the attention weight tensor that it will mask when you train the Transformer model.
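The broadcasting behaviour can be checked with a short NumPy sketch. It assumes that the attention scores have shape (batch, heads, query positions, key positions), which is a property of the multi-head attention layer that this section does not show, and it reuses the assumed convention of adding a large negative value at masked positions.

```python
import numpy as np

batch, heads, seq = 2, 8, 5
encoder_input = np.array([[4, 8, 1, 0, 0],
                          [6, 2, 5, 9, 0]])

# Padding mask with two singleton axes inserted: shape (batch, 1, 1, seq)
mask = (encoder_input == 0).astype(np.float32)[:, np.newaxis, np.newaxis, :]

# Stand-in attention scores; the (batch, heads, seq, seq) layout is an assumption
scores = np.zeros((batch, heads, seq, seq), dtype=np.float32)

# The singleton axes broadcast over all heads and all query positions
masked_scores = scores + mask * -1e9
print(mask.shape, masked_scores.shape)
```

Because the mask varies only along the batch and key axes, the same padded key positions are suppressed for every head and every query position of a given sequence.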

You will work with the parameter values specified in the paper, Attention Is All You Need, by Vaswani et al. (2017):

    h = 8  # Number of self-attention heads
    d_k = 64  # Dimensionality of the linearly projected queries and keys
    d_v = 64  # Dimensionality of the linearly projected values
    d_ff = 2048  # Dimensionality of the inner fully connected layer
    d_model = 512  # Dimensionality of the model sub-layers' outputs
    n = 6  # Number of layers in the encoder stack

    dropout_rate = 0.1  # Frequency of dropping the input units in the dropout layers
    ...

As for the input-related parameters, you will work with dummy values for now until you arrive at the stage of training the complete Transformer model. At that point, you will use actual sentences:

    ...
    enc_vocab_size = 20  # Vocabulary size for the encoder
    dec_vocab_size = 20  # Vocabulary size for the decoder

    enc_seq_length = 5  # Maximum length of the input sequence
    dec_seq_length = 5  # Maximum length of the target sequence
    ...

You can now create an instance of the TransformerModel class as follows:

    from model import TransformerModel

    # Create model
    training_model = TransformerModel(enc_vocab_size, dec_vocab_size, enc_seq_length, dec_seq_length, h, d_k, d_v, d_model, d_ff, n, dropout_rate)

The complete code listing is as follows:

    from model import TransformerModel

    enc_vocab_size = 20  # Vocabulary size for the encoder
    dec_vocab_size = 20  # Vocabulary size for the decoder

    enc_seq_length = 5  # Maximum length of the input sequence
    dec_seq_length = 5  # Maximum length of the target sequence

    h = 8  # Number of self-attention heads
    d_k = 64  # Dimensionality of the linearly projected queries and keys
    d_v = 64  # Dimensionality of the linearly projected values
    d_ff = 2048  # Dimensionality of the inner fully connected layer
    d_model = 512  # Dimensionality of the model sub-layers' outputs
    n = 6  # Number of layers in the encoder stack

    dropout_rate = 0.1  # Frequency of dropping the input units in the dropout layers

    # Create model
    training_model = TransformerModel(enc_vocab_size, dec_vocab_size, enc_seq_length, dec_seq_length, h, d_k, d_v, d_model, d_ff, n, dropout_rate)

You may also print out a summary of the encoder and decoder blocks of the Transformer model. Printing them out separately lets you see the details of their individual sub-layers. To do so, add the following line of code to the __init__() method of both the EncoderLayer and DecoderLayer classes:

    self.build(input_shape=[None, sequence_length, d_model])

Then you need to add the following method to the EncoderLayer class:

    def build_graph(self):
        input_layer = Input(shape=(self.sequence_length, self.d_model))
        return Model(inputs=[input_layer], outputs=self.call(input_layer, None, True))

And the following method to the DecoderLayer class:

    def build_graph(self):
        input_layer = Input(shape=(self.sequence_length, self.d_model))
        return Model(inputs=[input_layer], outputs=self.call(input_layer, input_layer, None, None, True))

This results in the EncoderLayer class being modified as follows (the three dots under the call() method mean that its body remains the same as the one that was implemented previously):

    from tensorflow.keras.layers import Input
    from tensorflow.keras import Model


    class EncoderLayer(Layer):
        def __init__(self, sequence_length, h, d_k, d_v, d_model, d_ff, rate, **kwargs):
            super(EncoderLayer, self).__init__(**kwargs)
            self.build(input_shape=[None, sequence_length, d_model])
            self.d_model = d_model
            self.sequence_length = sequence_length
            self.multihead_attention = MultiHeadAttention(h, d_k, d_v, d_model)
            self.dropout1 = Dropout(rate)
            self.add_norm1 = AddNormalization()
            self.feed_forward = FeedForward(d_ff, d_model)
            self.dropout2 = Dropout(rate)
            self.add_norm2 = AddNormalization()

        def build_graph(self):
            input_layer = Input(shape=(self.sequence_length, self.d_model))
            return Model(inputs=[input_layer], outputs=self.call(input_layer, None, True))

        def call(self, x, padding_mask, training):
            ...

Similar changes can be made to the DecoderLayer class too.

Once you have the necessary changes in place, you can proceed to create instances of the EncoderLayer and DecoderLayer classes and print out their summaries as follows:

    from encoder import EncoderLayer
    from decoder import DecoderLayer

    encoder = EncoderLayer(enc_seq_length, h, d_k, d_v, d_model, d_ff, dropout_rate)
    encoder.build_graph().summary()

    decoder = DecoderLayer(dec_seq_length, h, d_k, d_v, d_model, d_ff, dropout_rate)
    decoder.build_graph().summary()

The resulting summary for the encoder is the following:

    Model: "model"
    __________________________________________________________________________________________________
     Layer (type)                    Output Shape         Param #     Connected to
    ==================================================================================================
     input_1 (InputLayer)            [(None, 5, 512)]     0           []

     multi_head_attention_18 (Multi  (None, 5, 512)       131776      ['input_1[0][0]',
     HeadAttention)                                                    'input_1[0][0]',
                                                                       'input_1[0][0]']

     dropout_32 (Dropout)            (None, 5, 512)       0           ['multi_head_attention_18[0][0]']

     add_normalization_30 (AddNorma  (None, 5, 512)       1024        ['input_1[0][0]',
     lization)                                                         'dropout_32[0][0]']

     feed_forward_12 (FeedForward)   (None, 5, 512)       2099712     ['add_normalization_30[0][0]']

     dropout_33 (Dropout)            (None, 5, 512)       0           ['feed_forward_12[0][0]']

     add_normalization_31 (AddNorma  (None, 5, 512)       1024        ['add_normalization_30[0][0]',
     lization)                                                         'dropout_33[0][0]']

    ==================================================================================================
    Total params: 2,233,536
    Trainable params: 2,233,536
    Non-trainable params: 0
    __________________________________________________________________________________________________

The resulting summary for the decoder is the following:

    Model: "model_1"
    __________________________________________________________________________________________________
     Layer (type)                    Output Shape         Param #     Connected to
    ==================================================================================================
     input_2 (InputLayer)            [(None, 5, 512)]     0           []

     multi_head_attention_19 (Multi  (None, 5, 512)       131776      ['input_2[0][0]',
     HeadAttention)                                                    'input_2[0][0]',
                                                                       'input_2[0][0]']

     dropout_34 (Dropout)            (None, 5, 512)       0           ['multi_head_attention_19[0][0]']

     add_normalization_32 (AddNorma  (None, 5, 512)       1024        ['input_2[0][0]',
     lization)                                                         'dropout_34[0][0]',
                                                                       'add_normalization_32[0][0]',
                                                                       'dropout_35[0][0]']

     multi_head_attention_20 (Multi  (None, 5, 512)       131776      ['add_normalization_32[0][0]',
     HeadAttention)                                                    'input_2[0][0]',
                                                                       'input_2[0][0]']

     dropout_35 (Dropout)            (None, 5, 512)       0           ['multi_head_attention_20[0][0]']

     feed_forward_13 (FeedForward)   (None, 5, 512)       2099712     ['add_normalization_32[1][0]']

     dropout_36 (Dropout)            (None, 5, 512)       0           ['feed_forward_13[0][0]']

     add_normalization_34 (AddNorma  (None, 5, 512)       1024        ['add_normalization_32[1][0]',
     lization)                                                         'dropout_36[0][0]']

    ==================================================================================================
    Total params: 2,365,312
    Trainable params: 2,365,312
    Non-trainable params: 0
    __________________________________________________________________________________________________
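The parameter counts in the two summaries can be reproduced with a little arithmetic. The sketch below rests on assumptions, since the layer definitions are not shown in this section: each query, key, and value projection is taken to be a dense layer with bias mapping d_model to d_k (or d_v), the attention output projection maps d_v back to d_model, the feed-forward block consists of two dense layers with bias, and each normalization layer holds one scale and one offset vector of size d_model. The helper `dense_params` is hypothetical. This decomposition was chosen because it matches the reported numbers.

```python
def dense_params(n_in, n_out):
    # Weights plus biases of a fully connected layer
    return n_in * n_out + n_out


d_model, d_k, d_v, d_ff = 512, 64, 64, 2048

# Assumed attention layout: query, key, value, and output projections
attention = (2 * dense_params(d_model, d_k)
             + dense_params(d_model, d_v)
             + dense_params(d_v, d_model))

# Assumed feed-forward layout: d_model -> d_ff -> d_model
feed_forward = dense_params(d_model, d_ff) + dense_params(d_ff, d_model)

# Assumed layer normalization: one scale and one offset per feature
add_norm = 2 * d_model

# Encoder layer: one attention block, one feed-forward block, two normalization layers
encoder_total = attention + feed_forward + 2 * add_norm

# Decoder layer, counted as in its summary: two attention blocks and two normalization entries
decoder_total = 2 * attention + feed_forward + 2 * add_norm

print(attention, feed_forward, add_norm)
print(encoder_total, decoder_total)
```

Under these assumptions, the per-layer values agree with the Param # column, and the two totals agree with the Total params lines of the encoder and decoder summaries.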

For further reading on the topic, see the paper Attention Is All You Need by Vaswani et al. (2017).

In this tutorial, you discovered how to implement the complete Transformer model and create padding and look-ahead masks.

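Specifically, you learned how to create a padding mask for the encoder and decoder, how to create a look-ahead mask for the decoder, how to join the Transformer encoder and decoder into a single model, and how to print out a summary of the encoder and decoder layers.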

Do you have any questions?

Ask your questions in the comments below and I will do my best to answer.
