Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

[ad_1]

DevSecOps practices, together with continuous-integration/continuous-delivery (CI/CD) pipelines, allow organizations to reply to safety and reliability occasions rapidly and effectively and to provide resilient and safe software program on a predictable schedule and price range. Regardless of rising proof and recognition of the efficacy and worth of those practices, the preliminary implementation and ongoing enchancment of the methodology may be difficult. This weblog publish describes our new DevSecOps adoption framework that guides you and your group within the planning and implementation of a roadmap to useful CI/CD pipeline capabilities. We additionally present perception into the nuanced variations between an infrastructure group targeted on implementing a DevSecOps paradigm and a software-development group.

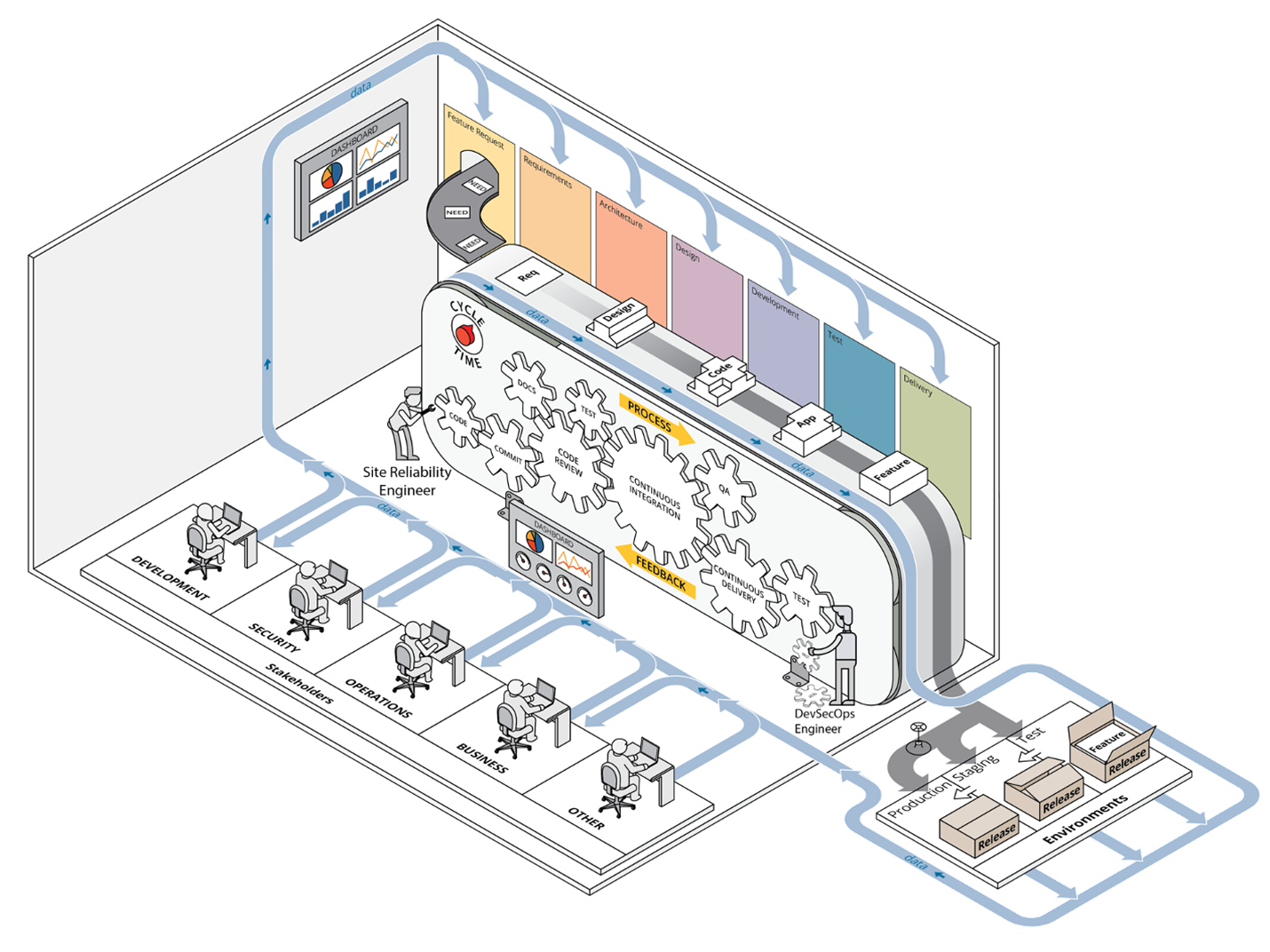

A earlier publish offered our case for the worth of CI/CD pipeline capabilities and we launched our framework at a excessive stage, outlining the way it helps set priorities throughout preliminary deployment of a improvement setting able to executing CI/CD pipelines and leveraging DevSecOps practices. Determine 1 beneath depicts the DevSecOps ecosystem, with the complete integration of all parts of a CI/CD pipeline involving stakeholders from a number of departments or teams.

Determine 1: DevSecOps Ecosystem

Our framework builds on derived rules of DevSecOps, corresponding to automation by the inclusion of configuration administration and infrastructure as code, collaboration, and monitoring. It supplies steering for making use of these DevSecOps rules to infrastructure operations in a computing setting by offering an ordered strategy towards implementing crucial practices within the phases of adoption, implementation, enchancment, and upkeep of that setting. Our framework additionally leverages automation all through the method and is particularly focused on the improvement and operations groups, generally known as site-reliability engineers, who’re charged with managing the computing setting in use by software-development groups.

The practices we define are based mostly on the precise experiences of SEI workers members supporting on-premises improvement environments tailor-made to the missions of the sponsoring organizations. Our framework applies whether or not your infrastructure is working on-premises in a bodily or digital setting or leveraging commercially out there cloud providers.

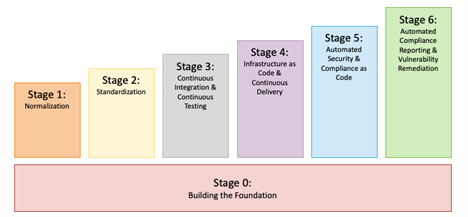

The phases of our framework, proven in Determine 2, are

0. constructing the inspiration

1. normalization

2. standardization

3. steady integration and steady testing

4. infrastructure as code (IaC) and steady supply

5. automated safety and compliance as code

6. automated compliance reporting and vulnerability remediation

Determine 2: Our DevSecOps Adoption Framework

Breaking the work down on this means ensures that effort is spent implementing fundamental DevSecOps practices first, adopted by extra superior processes later. This strategy is per the Agile follow of manufacturing small, frequent releases in help of finish customers, which on this case are software-development groups. Not solely are there dependencies within the early phases, but additionally a particular development within the complexity and issue of the practices in every stage. As well as, our framework allows adaptation to altering necessities and supplies ample alternatives for downside fixing as a result of many items of {hardware} and software program have to be built-in collectively to realize the aim of implementing a totally automated CI/CD pipeline.

Our framework addresses technical actions that can almost definitely be applied by technical workers. It doesn’t particularly tackle the group’s cultural modifications that will even be required to efficiently transition to DevSecOps. Such cultural shifts inside organizations are difficult to implement and require a distinct set of abilities and organizational mettle to implement than the practices in our technical framework. Whilst you might observe some overlap within the technical and cultural practices—as a result of it’s arduous to separate the 2 completely—our framework focuses on the technical practices that allow your DevSecOps ecosystem to evolve and mature. To learn extra about spearheading a profitable cultural shift, learn this weblog publish.

The next sections describe practices we contemplate as key to every stage, based mostly on our experiences deploying, working, and sustaining computing infrastructure in help of improvement groups. A standard theme throughout all of the phases is the significance of monitoring. There are myriad monitoring options, each handbook and computerized. Paying shut consideration to a group’s present scenario is invaluable to creating sound choices. Your monitoring strategies should subsequently evolve alongside along with your DevSecOps and CI/CD capabilities at every stage.

We’ve numbered this Stage 0 as a result of it’s a prerequisite for all CI/CD actions, although it doesn’t straight include practices particularly associated to CI/CD pipelines.

Earlier than you possibly can have any capacity to construct a pipeline, you could have collaboration instruments in place, together with a wiki, an issue-tracking system, and a version-control system. It’s essential to have your identity-management system applied accurately and be able to amassing logs of system and utility occasions and observing the well being of your collaboration instruments. These are the primary steps to enabling strong monitoring capabilities in later phases. For extra details about the method of deploying a computing setting from scratch in an on-premises or co-located information middle, learn our technical word.

This stage focuses on getting organized via the adoption of DevSecOps practices and the minimization of redundancy (corresponding to when performing database normalization). Encourage (or require) groups accountable for the deployment and operation of your computing setting to make use of the collaboration instruments you arrange in stage 0. The normalization stage is the place builders begin monitoring code modifications in a version-control system. Likewise, operations groups retailer all scripts in a version-control system.

Furthermore, everybody—the groups managing the infrastructure and the event groups they help—begins monitoring system points, bugs, and person tales in an issue-tracking system. Any deployments or software program installations that aren’t scripted and saved in model management needs to be documented in a wiki or different collaborative documentation system. The infrastructure group also needs to doc repeatable processes within the wiki that can’t be automated.

On this stage, try to be cognizant of the variables inside your setting that might be redundant. For instance, you need to start to restrict help of various working system (OS) platforms. Every supported OS provides a big burden with regards to configuration administration and compliance. Builders ought to develop on the identical OS on which they’ll deploy. Likewise, operations groups ought to use an OS that’s suitable with—and supplies good help for—the techniques they’ll be administering. Make sure to monitor different affordable alternatives to remove overhead.

This stage focuses on eradicating pointless variations in your setting. Be sure that your infrastructure and pipeline parts are properly monitored to take away the massive variable of questioning whether or not your techniques are wholesome. Infrastructure monitoring can embrace automated alerts relating to system points (e.g., low disk-space availability) or periodically generated experiences that element the general well being of the infrastructure. Outline and doc in your wiki normal working procedures for widespread points to empower everybody on the group to reply when these points come up. Use constant system configurations, ideally managed by a configuration-management system.

For instance, all of your Linux techniques may use the identical configuration settings for log assortment, authentication, and monitoring, it doesn’t matter what providers these techniques present. Scale back the complexity and overhead of working your computing setting by adopting normal applied sciences throughout groups. Specifically, have all of your improvement groups use a single database product on the again finish of their functions or the identical visualization software for metrics gathering. Lastly, institute units of ordinary standards for the definition of finished to make sure that groups have accomplished all mandatory work to contemplate their duties absolutely full. Along with monitoring the infrastructure, proceed monitoring remaining alternatives to normalize and standardize software utilization throughout groups.

This stage focuses on implementing steady integration, which allows the potential of steady testing. Steady integration is a course of that frequently merges a system’s artifacts, together with source-code updates and configuration objects from all stakeholders on a group, right into a shared mainline to construct and check the developed system. This definition can simply be expanded for operations groups to imply frequent updates to configuration-management settings within the version-control system. All stakeholders ought to continuously replace documentation within the groups’ wiki areas and in tickets when applicable, based mostly on the kind of work being documented. This strategy allows and encourages the codification and reuse of deployment patterns helpful for constructing functions and providers.

Modifications made to a codebase within the version-control system ought to then set off construct and check procedures. Steady testing is the follow of working builds and exams every time a change is dedicated to a repository and needs to be a normal follow for software program builders and DevSecOps engineers alike. Testing ought to embody all sorts of modifications, together with code for software program tasks, shell scripts supporting system operation, or infrastructure as code (IaC) manifests. Steady testing lets you get frequent suggestions on the standard of the code that’s dedicated.

Unit, useful, and integration exams ought to all be triggered when code is dedicated. You need to monitor the outcomes of your automated exams to acquire correct suggestions about whether or not the builds had been profitable. Likewise, these exams improve confidence that code is working accurately earlier than pushing it into manufacturing.

Our expertise exhibits that it’s simple to overlook sure failure modes and move non-functioning code, notably whenever you’re not diligent about including error checking and testing for widespread failures. In different phrases, your monitoring techniques have to be mature and sturdy sufficient to speak when issues are occurring with the modifications made to infrastructure or code. Furthermore, groups’ definition-of-done standards ought to evolve to make sure that normal practices additionally evolve based mostly on particular person and group experiences to keep away from widespread failure modes from occurring continuously.

This stage accelerates your automated deployment processes by integrating infrastructure as code (IaC) and steady supply. IaC is the potential of capturing infrastructure deployment directions in a textual format. This strategy lets you rapidly and reliably deploy elements of your setting on both a bare-metal server, a digital machine, or a container platform.

Steady supply is the follow of routinely making use of modifications (options, configurations, bug fixes, and so forth.) into manufacturing. IaC promotes setting parity by eliminating creeping configuration modifications throughout techniques and environments that might produce completely different outcomes. The chief good thing about utilizing IaC and steady supply collectively is reliability. The IaC functionality can improve confidence in your setting deployments. Likewise, the continuous-delivery functionality will increase confidence in your automated change supply to these environments. Launching these two capabilities creates many potentialities.

Because you began leveraging automated testing in Stage 3, Stage 4 lets you implement the requirement that every one exams achieve success earlier than modifications are deployed into manufacturing. In flip, this lets you

At this level, your infrastructure and the product below improvement turn into a tightly built-in system that lets you absolutely perceive the general state of your system. To repeat a key follow from the earlier stage, all these potentialities are enabled by a sturdy monitoring system. In truth, advancing previous this stage into the subsequent stage is nearly inconceivable and not using a steady monitoring functionality that rapidly and precisely supplies details about the state of the system and the system’s parts.

This stage advances your automation capabilities past infrastructure and code deployments by including safety automations and compliance as code. Compliance as code means you’re monitoring your compliance controls in scripts and configuration administration in order that instruments can routinely make sure that your techniques are compliant with relevant rules and situation an alert when non-compliance is detected. These efforts mix the work of the safety groups and the DevSecOps groups as a result of your safety groups will probably outline necessities for the safety controls, which supplies the chance for technical folks to automate adherence to these controls. There are various software program items that come collectively to make this work, so you really want the earlier 4 phases in place earlier than endeavor this effort on this stage.

One software that’s important on this stage is a devoted vulnerability-scanning software. You’ll additionally need your monitoring system to alert the right set of individuals when safety points are detected routinely. Different instruments may be leveraged to routinely set up system and utility updates and to routinely detect and take away pointless software program.

This remaining stage is the last word in supporting DevSecOps ecosystems as a result of now that you just’ve automated your system configurations, testing, and builds, you possibly can deal with automating safety patches and steady suggestions via automated report technology or notifications throughout DevSecOps pipelines. Compliance with safety rules typically includes periodic evaluations and assessments, and having experiences available which were routinely generated can enormously cut back the hassle required to arrange for an evaluation. Precisely what experiences needs to be generated relies on your particular setting, however a couple of examples of helpful experiences embrace

Furthermore, new vulnerabilities typically turn into recognized at random intervals based mostly on vendor releases and analysis bulletins. The flexibility to routinely generate experiences about system standing, safety points, and the affect of latest vulnerabilities in your techniques and functions implies that your group can rapidly and effectively prioritize the work that have to be finished to make sure that your computing setting is as safe appropriately. Furthermore, automating the set up of safety patches to your techniques and software program helps to cut back the quantity of handbook effort spent sustaining compliant system and utility configurations and may cut back the variety of findings in your automated vulnerability scans. Likewise, with the ability to routinely monitor the outcomes of all these automated processes ensures that the folks in your group can step in to restore issues as they come up.

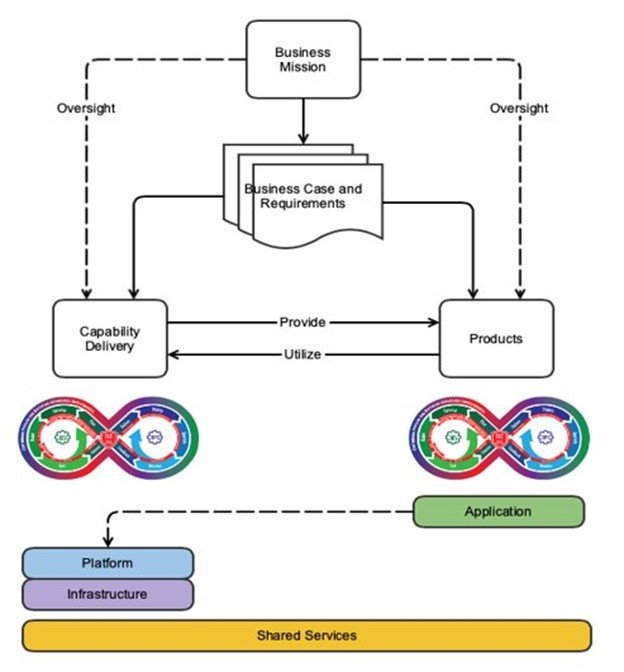

As talked about above, we created our DevSecOps adoption framework particularly to help groups that deploy and preserve computing environments upon which different groups will design and use CI/CD pipelines. This attitude is just like—however finally contrasts with—the standard use of DevSecOps practices. Determine 3 beneath focuses on the functionality supply department, whereas most builders and DevSecOps practitioners are targeted on the merchandise department.

Determine 3: DevSecOps Platform-Unbiased Mannequin

The DevSecOps Platform-Unbiased Mannequin proven in Determine 3 explores this idea at an summary stage. Each the functionality supply department and the merchandise department are designed to fulfill the mission wants of the enterprise, however their designs produce two completely different plans: a system plan and a product plan. There are two distinct plans as a result of there are two distinct entities at play right here: the pipeline/system and the product that’s being developed. Although they’re associated, they’re distinct. Right here we provide one instance of the excellence between the 2, which is the huge distance between the product operators and the builders of these merchandise.

In an instance of a DevSecOps group targeted on the merchandise department, operations personnel work to help the manufacturing setting on which the software program product is deployed. This expertise permits for attention-grabbing insights, like “Launch X brought about instability at manufacturing scale as a result of it elevated CPU utilization by 50 %.” This perception can then be rapidly and effectively fed again to the builders who can work to deal with the underlying situation. In different phrases, the operations personnel and the event personnel are related intently via the DevSecOps suggestions loop. In the end, builders, safety personnel, and operational personnel are intently knit by a standard enterprise mission and work towards a standard aim of offering the very best software program product.

Conversely, the DevSecOps groups targeted on the functionality supply department are accountable for the operation and upkeep of a improvement computing setting, they usually work to offer entry to a big variety of instruments to varied stakeholders. The event personnel require entry to improvement instruments and software program libraries. The safety personnel require entry to vulnerability-scanning instruments and reporting instruments. The operations personnel themselves require entry to monitoring instruments, visualization instruments, and hardware-management instruments.

Few if any of those instruments are developed in-house—as an alternative they’re both open-source or commercial-off-the-shelf instruments. This dynamic supplies an vital separation of the operations group from the product-development group. In distinction to the standard situation described above, when the operations group notices {that a} new launch breaks one thing of their setting, they’ll attraction to the builders of the product for a repair that might get applied if it impacts sufficient clients. In different phrases, the operations groups don’t collaborate with the event groups below the identical enterprise mission, however as an alternative are merely clients of the distributors who present the merchandise in use.

What’s extra, when the unpredictability of infrastructure failures and person hassle tickets are added, they’ll disrupt the Agile practices of backlog grooming, dash planning, and the metric of velocity. These dynamics are completely different from a pure DevSecOps technique they usually could also be uncomfortable for folks used to working in a improvement setting, the place the whole group is targeted 100% of the time on a single mission. However with a couple of diversifications to that conventional DevSecOps mannequin, this framework may be utilized to offer the advantages of DevSecOps practices in an operationally targeted setting.

The aim of implementing a resilient computing setting that may help a DevSecOps ecosystem is formidable, in the identical means that “develop working software program” is an formidable—but important—aim. Nonetheless, the advantages of implementing DevSecOps practices—together with CI/CD pipelines—typically outweigh the prices of these difficulties.

Correct planning on the early phases could make the later phases simpler to implement. Our framework subsequently advocates for breaking the method down into small, manageable duties, and prioritizing repeatability first, automation second. Our framework orders the duties in a logical development in order that the basic constructing blocks for automation are put in place earlier than the automation itself.

The development via the phases will not be a one-time effort. These practices require ongoing effort to maintain your improvement setting and software program merchandise updated with the newest in technological developments. It’s best to periodically consider your present practices towards the out there information, instruments, and sources, and regulate as essential to proceed to help your group’s mission in the simplest means attainable.

[ad_2]